My Scholars' Lab Talk About n-Dimensional Archives

A few days ago I had the good fortune to speak for a bit at the Scholars’ Lab at the University of Virginia. It was an actual event, with signs and people in attendance and everything! People attended for good reason: the other two speakers were Jerome McGann and Bethany Nowviskie. I wanted to hear them.

You see, just about everything I had to say is based entirely on McGann’s essay “Marking Text in Many Dimensions”, and Nowviskie’s experiences in the SpecLab with this piece of vaporware called the ‘Patacritical Demon. [You can read about some of those experiences in Johanna Drucker’s SpecLab: Digital Aesthetics and Projects in Speculative Computing.]

Some bits of my talk were also present in my Yale PDP talk, but not too much. The goal of both was the same: inspire movement and change.

[Throughout this text I’ll either place the slides I used or will provide off-site links to things for more context. Look for emphasized bracketed text like this. I should also note that I write talks like I speak, with pauses and breaks and such (which means comma splices at times, and other bad grammar).]

n-Dimensional Archives (or, What Do You Want the Future to Be?)

“The future echoes through our present so persistently that it is not merely a metaphor to say the future has arrived before it has begun.”

I’m using this Hayles quote out of context. “Computing the Human” is about imagined futures and understanding cyberculture, and we’re here to talk about creating and interacting with n-dimensional archives. But when taking the sentiment literally, it gives me a handy way to get to the challenge I put forth to all of you today: WHAT DO YOU WANT THE FUTURE TO BE?

In this case, what do you want future digital archives to look like, and how do you want to interact with them?

I have some ideas, as I’m sure all of you do, and my ideas are in no small part informed by the past: the traditional codex and the ways in which we interact with it, plus work done at this very institution, work that has traditionally been ahead of its time and thus has run into “technical limitations” far more than it has any theoretical or conceptual limitations. But more than that, my grand vision of the archive consists of little more than pulling together all the technologies we have right in front of us, right here in the present, to make an awesome scholarly future.

“Technologies” doesn’t necessarily mean markup languages or server-side programming or superfast disk arrays that store terabytes upon terabytes of information. Technologies, as Martha Nell Smith reminds us, are simply the means by which we might accomplish various ends.

In her essay “Computing: What’s American Literary Study Got to Do With IT?”, Smith discusses four technologies that should be driving humanities computing. Bear in mind this essay is almost ten years old at this point, and we haven’t yet mastered more than one or two of these technologies, if even that.

First, the technology of access. This is the one we’re good at—making available to all what was once available to experts only, locked away in rare book rooms or libraries far from our students. Even the most static text-based archive can involve new readers with old texts, or produce different reading experiences than before.

Second, the technology of multimedia study objects or digital surrogates. Simply putting content online does not necessarily mean that object is a digital surrogate for the text. Ideally, what this particular technology will have brought about is, first, something to access. But more important, the creation of digital surrogates necessitates increased editorial responsibility and accountability with regard to material selections, encoding practices, and the representation of highly structured information that can expand, be built upon, and itself grow.

Third, the technology of collaboration. [I commented aside that we can now just refer to this as “Nowviskie Technology”] Embracing collaboration goes against traditional humanities training, but the means to accomplish ends in humanities computing projects must include a vast array of colleagues—managers, librarians, programmers, designers, visionaries, and so on. You might find a traditional literary scholar playing one or more of those roles. Then again, you might not. And you might find all of those roles in-house, or you might find yourself making new knowledge and new knowledge environments with the folks up the road, or across the continent, or across the ocean.

Finally, the technology of self-consciousness. While collaborating on a project to produce digital surrogates to increase access to texts, we must “maintain a relentless self-consciousness” of how the core objects of study (the ones we are remediating) have been produced, both in the first place and in their new spaces. Smith uses her experiences with Dickinson as an example, as she reminds us that “neither the reproductions of texts nor critical interpretations can be innocent of or superior to politics, since both require negotiations among authors, editors, publishers, and readers,” and ensuring those negotiations and interventions are reproduced in the digital archive is crucial to the reader’s understanding of the entire textual condition of the work. In other words, to represent Dickinson’s poetry simply as text on a screen, without constant awareness of its production history, would be to miss the point (or at least the potential and power) that the digital archive affords us.

Using Smith’s four technologies as a guide, in the scholarly future that I foresee, digital archives move. For lack of a better word, they are alive and grow exponentially through external connections and user interactions. They are truly rhizomorphous.

That word has been bandied about before. Most notable (to me, anyway) was Ed Folsom’s use of “rhizomorphous” to describe the Whitman Archive in the October 2007 PMLA issue on remapping genre. Notable scholars [and here I pointed right at Jerry, obviously] were quick to point out in their response pieces that the reliance of the Whitman Archive and its technical design

[I use this screenshot just to show the static text plus image that populates the archive]

Whitman’s work itself may be rhizomorphous but the database and methods for user interaction are not. Instead of providing a space through which connections can be made, the reader is still limited by what has been placed in the archive, in the manner in which the editors placed it, accessible only through a rigid interface; there is no technical interface to internal or external connections between texts and paratexts.

It is beyond time that digital archives of texts begin to adhere to the methodologies learned from Web 2.0 activities and applications and embrace the concepts of layering shared data and user-generated or user-customized content onto the core curated data within the archive. To do so, we must closely examine the texts and paratexts that are physically present in a text-based archive, recognizing that these tangible elements are themselves already highly relational and overlap and crisscross in ways not easily seen, analyzing the whole of the work, and finally building up from the frameworks available in literary theory and the structures already present in texts.

In my imagined scholarly future, new knowledge environments mean archives that move and grow, and to get there we need the technology of collaboration and the technology of self-consciousness far more than anything else. With those two technologies more than any others, we can actually build the ‘Patacritical Demon. And building with the Demon in mind should actually be the starting point for design work when creating an interactive archive of texts.

Briefly, and quoting entirely from specifications documents, the Demon is “a markup tool for allowing the reader to record and observe interpretive moves through a textual field. The reader marks what are judged to be meaningfully interesting places/moments in that spacetime field—the marks being keyed to a set of control dimensions […] and behavioral dimensions.”

In the behavioral dimensions, readers can mark words, how those words (or images) relate within the whole field, and note issues of transmission and reception—among other things.

[I showed a slide with the seven behavioral dimensions on it: linguistic, imagistic, documentary, graphical/auditional, semiotic, rhetorical, and social. You can read more about them at the end of “Marking Texts in Many Dimensions”.]

In the control dimensions, one could compare marks made in the linguistic dimension during one session against those made in another, or reevaluate the resonance of a mark made in some behavioral dimension when revisiting it in another session.

[I showed a slide with the three control dimensions on it: temporal, resonance, and connection. You can read more about them at the end of “Marking Texts in Many Dimensions”.]
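To make the comparison across sessions concrete, here is a minimal sketch of how marks keyed to behavioral and control dimensions might be stored and compared. It is not taken from the Demon's specification documents: the `Mark` fields, the use of character offsets, and the 0.0 to 1.0 resonance scale are all assumptions made for illustration, and the connection dimension is left out for brevity.

```python
from dataclasses import dataclass
from enum import Enum


class Behavioral(Enum):
    """The seven behavioral dimensions listed on the slide."""
    LINGUISTIC = "linguistic"
    IMAGISTIC = "imagistic"
    DOCUMENTARY = "documentary"
    GRAPHICAL_AUDITIONAL = "graphical/auditional"
    SEMIOTIC = "semiotic"
    RHETORICAL = "rhetorical"
    SOCIAL = "social"


@dataclass(frozen=True)
class Mark:
    reader: str            # who made the mark (hypothetical identifier)
    session: int           # temporal control dimension: which reading session
    dimension: Behavioral  # behavioral dimension the mark belongs to
    start: int             # character offset where the marked span begins
    end: int               # character offset where the marked span ends
    resonance: float       # resonance control dimension, assumed 0.0 to 1.0


def spans(marks, dimension, session):
    """Set of (start, end) spans marked in one dimension during one session."""
    return {(m.start, m.end) for m in marks
            if m.dimension is dimension and m.session == session}


def compare_sessions(marks, dimension, first, second):
    """Split spans into those kept, dropped, and added between two sessions."""
    a = spans(marks, dimension, first)
    b = spans(marks, dimension, second)
    return {"kept": a & b, "dropped": a - b, "added": b - a}


marks = [
    Mark("reader-a", 1, Behavioral.LINGUISTIC, 0, 12, 0.4),
    Mark("reader-a", 1, Behavioral.LINGUISTIC, 30, 41, 0.7),
    Mark("reader-a", 2, Behavioral.LINGUISTIC, 30, 41, 0.9),
    Mark("reader-a", 2, Behavioral.LINGUISTIC, 50, 58, 0.5),
    Mark("reader-a", 2, Behavioral.SOCIAL, 0, 12, 0.8),
]
diff = compare_sessions(marks, Behavioral.LINGUISTIC, 1, 2)
```

Here `diff` reports the span (30, 41) as kept, (0, 12) as dropped, and (50, 58) as added. Because every mark carries a `reader` field, the same filter could be extended to show one reader the marks made by another.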

A second site of interactivity would be to view the marks made by other readers of the same text. When I talked about this at the recent Yale PDP graduate symposium, an audience member said “does that mean I could see the marks Harold Bloom made on a text?” In a perfect world of puppies and rainbows and ponies for everyone, yes. Yes it does.

I can think of several different ways to implement this tool: as a standalone application, as a web application, or as an interactivity layer on top of existing archives (as in a pluggable architecture such as Omeka, if Omeka were designed to deal with encoded text).

The CATMA tool hints at being able to do some of what’s been described. This is a standalone Java application that allows you to load a text locally and make marks and analyze text. But this is not an archive; it’s a tool. In my future archive I want an interactivity layer built right in.

The concept of marking layers on top of text is really no different from that of Web annotation applications such as Diigo, which allow users’ marks to appear within the visual field of the document presented.

In practice, marking in many dimensions could be as simple as using the HTML5 <canvas> tag and the z-index property of the element containing the canvas. The z-index property sets the stacking order of positioned elements, and transparency comes from the CSS opacity property or from the alpha values used when drawing, so one could effectively layer a user’s marks on top of each other and interact on the client side to reveal or hide those marks as required, using client-side keypress or mouse actions to swap the visibility properties of the underlying markup elements. [So, literally we’re talking about marks here, just like you’d mark in a physical text. Here’s one example of an HTML5/JavaScript drawing tool. These marks are just (x,y) coordinates that can be stored.]
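Since the marks are just stored coordinates, the layering logic can be sketched apart from any browser. The following is a rough illustration only, assuming a hypothetical `Layer` record whose `z_index`, `visible`, and `opacity` fields mirror the CSS properties of the same names; the actual drawing would still happen on a canvas in the client.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class Layer:
    name: str             # e.g. one reader's marks, or one dimension
    z_index: int          # stacking order: higher values are painted on top
    visible: bool = True  # flipped by a keypress or mouse action in a real UI
    opacity: float = 1.0  # 0.0 (fully transparent) to 1.0 (opaque)
    strokes: list = field(default_factory=list)  # each stroke: list of [x, y]


def toggle(layers, name):
    """Flip the visibility of the named layer, as an event handler might."""
    for layer in layers:
        if layer.name == name:
            layer.visible = not layer.visible


def draw_order(layers):
    """Names of the visible layers, bottom to top, in painting order."""
    ordered = sorted(layers, key=lambda layer: layer.z_index)
    return [layer.name for layer in ordered if layer.visible]


def save(layers):
    """Serialize every layer, hidden or not, to JSON for storage."""
    return json.dumps([asdict(layer) for layer in layers])


def load(text):
    """Rebuild Layer objects from the JSON produced by save()."""
    return [Layer(**record) for record in json.loads(text)]


layers = [
    Layer("social", z_index=2, opacity=0.5, strokes=[[[10, 12], [14, 18]]]),
    Layer("linguistic", z_index=1, strokes=[[[3, 4], [5, 6], [9, 9]]]),
]
toggle(layers, "social")       # hide the social layer
restored = load(save(layers))  # round-trip through storage
```

After the toggle, only the linguistic layer remains in the drawing order, and the hidden layer still survives the round trip through storage, so a reader can reveal it again in a later session.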

But more important to the discussion of the ‘Patacritical Demon as paradigm for archival interface is the necessity for the texts in the archive itself to be multifaceted, multidimensional, and in effect boundless.

To turn back to the PMLA cluster and the Whitman Archive: Meredith McGill challenges Folsom’s claim that the Whitman Archive “permits readers to follow ‘the webbed roots’ of Whitman’s writing as they ‘zig and zag with everything’”; the archive doesn’t do this. The texts are there, to be sure, but in no form other than a static digital reproduction of the book, important though that may be, with no interface that identifies, highlights, or in any way illuminates or stores these webs and zigs and zags any differently than the physical book, and with no apparatus that allows the user to mark their own path or paths.

McGill offers suggestions for encouraging the rhizomorphous connections that could be made when she says the archive should “provide hyperlinks to Whitman’s editorials or to his short fiction that is available through public-domain sites such as Making of America” and so on. These are good suggestions to be sure, but still antiquated in relation to how content can be and is shared online. When McGill asks “what would it take to realize Folsom’s vision of a database that allows readers to follow Whitman’s writing as it ‘darts off in unexpected ways’?” I answer that it’s not the database that allows this; it’s the interface to the database, plus the interface to someone else’s database. Instead of using an uncontextualized hyperlink to send the reader outside the archive, use application programming interfaces to bring the content inside. In other words, mash up the archive.

If you want an archive to be rhizomorphous, then implement the processes that can mine the data from other sources on demand, as the user winds their way through scholarly work. With editorial (and programmatic) control over the APIs in use, the contextual and paratextual information that could be associated with the core archival texts through external means would truly allow the scholar to find and illuminate their own paths through and beyond the archive.
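As a rough sketch of what bringing the content inside could look like, the code below merges results from several external sources on demand while an editor-approved list controls which sources are consulted. Everything named here is hypothetical: the `Paratext` record, the adapter functions, and the example.org URLs stand in for real APIs, and the adapters are plain functions so that nothing touches the network.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


@dataclass(frozen=True)
class Paratext:
    source: str  # which external collection supplied the item
    title: str
    url: str     # kept as provenance, not as the reader's only route
    text: str    # the content itself, brought inside the archive


# An adapter takes a query and returns matching items. In a real system each
# adapter would wrap one external API; here they are stand-in functions.
Adapter = Callable[[str], List[Paratext]]


def gather(query: str, adapters: Dict[str, Adapter],
           approved: Set[str]) -> List[Paratext]:
    """Query every editor-approved source on demand and merge the results."""
    results: List[Paratext] = []
    for name, adapter in adapters.items():
        if name not in approved:  # editorial control over the APIs in use
            continue
        try:
            results.extend(adapter(query))
        except Exception:
            # One unreachable source should not break the reader's path.
            continue
    return results


def periodicals(query: str) -> List[Paratext]:
    return [Paratext("periodicals", f"Editorial mentioning {query}",
                     "https://example.org/p/1", "placeholder text")]


def letters(query: str) -> List[Paratext]:
    raise TimeoutError("source unavailable")


def unvetted(query: str) -> List[Paratext]:
    return [Paratext("unvetted", "Unreviewed item",
                     "https://example.org/u/1", "placeholder text")]


found = gather(
    "Whitman",
    {"periodicals": periodicals, "letters": letters, "unvetted": unvetted},
    approved={"periodicals", "letters"},
)
```

In this run `found` holds the single item from the periodicals source: the letters source fails and is skipped, and the unvetted source is never called because the editors have not approved it.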

In some ways, this gets at the heart of the Open Annotation Collaboration, which recognizes the value of annotation as an aid to memory, an ability to add commentary and to classify, and as scholarly work in its own right. The goals of the project include the facilitation of a web-based interoperable annotation environment across clients, servers, and collections.

One of the OAC use cases describes making annotations which capture netchaining practices. In this example, the scholar’s research path—and in fact multiple scholars’ research paths—link together multiple sources within one environment.
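One way to picture research paths linking sources together is as a chain of small records, each noting where a scholar was and where an annotation led next. The sketch below is an illustration under that assumption only; it does not follow the Open Annotation Collaboration's actual data model, and the `Link` record and its field names are invented here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    scholar: str
    source: str  # where the scholar was
    target: str  # where the annotation led next
    note: str


def path(links, scholar, start):
    """Follow one scholar's links from a starting source until the chain ends."""
    step = {l.source: l.target for l in links if l.scholar == scholar}
    trail, seen = [start], {start}
    # Stop at a dead end, or before revisiting a source (guards against loops).
    while trail[-1] in step and step[trail[-1]] not in seen:
        trail.append(step[trail[-1]])
        seen.add(trail[-1])
    return trail


def crossings(links):
    """Sources that appear in more than one scholar's chain."""
    visitors = defaultdict(set)
    for l in links:
        visitors[l.source].add(l.scholar)
        visitors[l.target].add(l.scholar)
    return {source for source, who in visitors.items() if len(who) > 1}


links = [
    Link("scholar-a", "essay", "letter", "phrase reused from the letter"),
    Link("scholar-a", "letter", "journal", "letter draws on a journal entry"),
    Link("scholar-b", "review", "letter", "review quotes the same letter"),
]
trail = path(links, "scholar-a", "essay")
shared = crossings(links)
```

Here `trail` runs from the essay to the letter to the journal, and `shared` contains only the letter, the one source where the two scholars' paths meet.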

Now think about a similar application or process within an archive of a single author’s work. Instead of (or in addition to) netchaining posthumous commentary, use textual analysis tools and literal marks in the behavioral and control dimensions to uncover elements of production. Archiving a text that effectually moves around a core curated data set, but pivots on collaborative connections and interfaces does not kill the book. Instead, it allows the book to grow to be as much of a force in the future as it is a “force in history”, invoking a sort of open system circuit model and adding the dimension of time and space into the mix—re-reading as others read, adding posthumous paratexts.

When I first mentioned my archive ideas to Bethany, in the discussion that caused her to invite me here in the first place, I told her about the particular content I work with—the work of John Muir. I described to her how I wanted to create an archive of Muir’s annotations of the 1906 edition of The Writings of Henry David Thoreau. If the Thoreau volumes served as the core text in a Demon-like setting, recreating Muir’s annotations would occur in many dimensions, and serve as a site for annotation themselves. Users such as myself would then be able to annotate Muir’s annotations, and link them back to the letters and other texts Muir wrote after marking up his Thoreau.

[What I didn’t talk about was the incredibly interesting temporal dimension that exists in Muir’s work. Had I started to mention it, I would have gone on for hours. Here are some brief bits written generally (I have a whole thesis on this stuff). I’ve taken this extra text out of italics because that would just be annoying.]

People often put Muir’s work on a clear path of influence from Emerson (Nature, 1836; Essays, First Series, 1841; etc) to Thoreau (Walden, 1854; “Walking”, 1862) to Muir, and that because of the influence of Ezra & Jeanne Carr in his life, he must have read certain texts at certain times. But that’s not necessarily the case. Certainly Emerson and Thoreau influenced Muir, but scholars have largely ignored the precise timeline when discussing a direct Emersonian or Thoreauvian influence on Muir’s spiritual beliefs and environmental philosophy. For instance, Muir’s three major autobiographical works cover the period from his childhood in the 1840s through 1868, his first year in California: The Story of My Boyhood and Youth, A Thousand-Mile Walk to the Gulf, and My First Summer in the Sierra. However, Muir did not begin to work on these books until he was in his fifties. The text and illustrations of these books were culled from journals kept during their respective periods, but even those journals were heavily edited by Muir, well after the original entries were made.

In his biography, The Young John Muir, Stephen Holmes reminds scholars to remain skeptical of Muir’s written portrayal of his feelings and his seeming adherence to philosophical principles; Muir’s original journals no longer exist, just his own edited versions. Thus, Holmes insists, stories such as Muir’s first entry into Yosemite, “including a dramatic, ecstatic ‘conversion’ and total reorientation of his life—must be understood not as his immediate, intuitive responses to Yosemite but rather as the self-conscious results of his later literary and philosophical development.” Similarly, when readers note what appear to be quotations or paraphrasing from Emerson or Thoreau, we must not assume the connection comes from the original event, but upon reflection. To understand Muir’s emotional and philosophical growth, and to pinpoint times of direct influence by Emerson and Thoreau, one must examine Muir’s letters—many of which became his first published essays. Although he struggled with authoring articles and books, for reasons which will be discussed later in this thesis, Muir had a fondness for letter-writing and the personal connection he could make via the post. On a more practical level, scholars have dated original letters from which one can make a direct connection between, say, Muir’s reading of Thoreau and a Thoreauvian set of descriptions of his surroundings. For instance, a letter dated May 29, 1870, finds Muir musing on the similarities of the woods he found in “Canada West” and those described in Thoreau’s The Maine Woods. Thus, we have specific evidence of the first time Muir read The Maine Woods: May of 1870 while sitting in Yosemite, thinking about a journey he had taken several years before. I’ll stop here, but like I said, I can go on for hours.

While Muir’s annotations of Thoreau’s work aren’t yet digitized, his drawings, correspondence, and journals are now available from the Holt-Atherton Special Collections at the University of the Pacific. This is a relatively new production, as 18 months ago I was still working off microfilm. I am thrilled to no end that I can access this archived material, but I am also disappointed at the missed opportunity: here we have another digitization project that used no more technology than the technology of access.

Working with Muir is a textual scholar’s dream, which is ironic because Muir hated producing text. Hated to write. Didn’t know how he could ever turn “dead bone heaps of words” into something that would entice visitors or raise awareness for conservation. Yet, Muir essentially wrote Yosemite National Park into existence. And that’s not something you could trace or mark through the static archive of documents now available—and I want to. Basically, I want to be able to fire up the Demon and mark the heck out of the social dimension of Muir’s texts, and ensure that as this text grows, posthumously, these reformations of the text make it into the archive as well.

[Here’s where I segue into a story of a text and its production and continual growth.]

Intent on using the reputation of the Century and the power of the press to their benefit, Muir and Robert Underwood Johnson created an elaborate plan to introduce Century readers to the need for federal designation of Yosemite Valley as a national park. Theirs was a symbiotic relationship; each man provided what the other could not. Muir lacked political connections in Washington and the temperament for ongoing lobbying within the city, and Johnson had no experience summiting peaks, walking along glaciers, or basking in the Range of Light, let alone writing about it. Author and editor forged an unbreakable bond as part of the literary production circuit.

Muir’s two essays “The Treasures of the Yosemite” and “Features of the Proposed Yosemite National Park” appeared in the August and September 1890 issues of the Century, respectively. In 1890, circulation of the Century was at its highest ever: over 200,000. The main elements of the production model were in place—author, editor, publisher, reader—and only the matter of the text remained.

But that was a difficult matter since Muir had difficulties with writing. So, Johnson fed him ideas both for subject matter and rhetoric. Johnson also solicited text from Easterners who had gone West, to add context to the Muir essays in print. Johnson’s letters to Muir, filled with suggestions and corrections, plus the additional essays published in the Century, should all be available in the archive, and could be through application programming interfaces and shared content. An interactivity layer on top of this content would allow marks to be made; the thickening of the text itself thus becomes apparent.

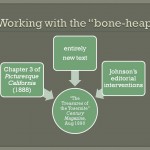

But the text of Muir’s essays themselves, in addition to Johnson’s editorial interventions, also contains bits and pieces of previous work.

[The three slides below show how these essays contained bits from other work, and then spawned other texts. Click each to embiggen.]

And still, there’s a rhizomatic production model to this text that sprouts new social connections as time passes and the context for reading as well as authoring the text both change. The two essays would eventually turn into a book, and as a book, The Yosemite continues to exist as a “thing-in-itself.” New production models of the author-editor-publisher-printer-reader relationship were solidified when blocks of text were buried within a large sheet of newspaper in the 1870s, printed on quality paper in the Century in 1890, filled the gilt-laden pages of The Yosemite in 1912, accompanied the black and white photographs of Ansel Adams in 1948, were reproduced on high-color glossy pages with photographs by Galen Rowell in 1989, and found their way into Ken Burns’s recently released television series The National Parks. At every turn, new technologies reshaped and enhanced Yosemite (the park and the book); digitization of the book itself, as well as its constituent parts, could allow the scholar to uncover its formulation in the first place. I want all of those parts in my archive, and I want to be able to mark them in many dimensions.

Think about an archive that doesn’t stop with the last digitized image and TEI encoded page pair that has been carefully curated and sits happily on a server; think about the connections or pathways that could unfold as you are able to mark the paths that you take through a text and its paratexts. Each path and set of connections brings about new knowledge, and the ability to mark those paths from session to session, and to compare not only the paths and the texts that unfold but also the impetus behind following those paths, is the creation of a new knowledge environment.

Now think about integrating other tools into that application: tools from this very lab for collecting and annotating objects and comparing and collating textual works; all the work being done on Neatline that enables visualization over time; a whole host of tools and data available through application programming interfaces. All of these processes either exist in parts or have clear developmental paths to their creation. So, WHAT ARE WE WAITING FOR?

And thus endeth my portion. This was intended as a segue to Jerry and Bethany. When the podcast of this event is available, you’ll be able to hear their comments and discussions about the prototypes and processes they made in the SpecLab, how these marks and dimensions played with each other, and how this is still very much a viable idea (in other words, I’m not crazy!), as well as audience comments and questions, and get a better sense of what this is all about. I mean, besides really cool stuff. I use Muir because I work on Muir; I could have just as easily swapped in Owen Wister or Frank Norris or the cadre of women authors in the post-Bret Harte era of the Overland Monthly, as I work on all of those things. The underlying principle remains: I want archives that move and grow and allow scholars to annotate, share, and discover new things in a way that a new medium/tool/platform/process allows.

new blog post: text, slides, links from my Scholars’ Lab talk on n-dimensional archives

This comment was originally posted on Twitter
